Hashing

Hashing is a process that transforms an arbitrary piece of data into a fixed-size value, typically an integer. In the context of system design, this arbitrary data could be an IP address, username, HTTP request, or anything that can be hashed or transformed into an integer value. Hashing plays an essential role in various aspects of system design.

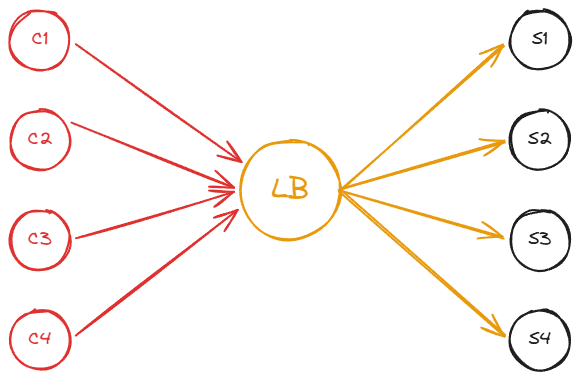

How can hashing be used in load balancers? Imagine that we have four clients, four servers (each with its own cache), and a load balancer that distributes the load. The requests that clients issue are computationally expensive, meaning they involve significant resources and time to complete.

Without the right selection strategy, the load balancer may direct requests to a server that doesn’t have the necessary cached data. This is where hashing comes into play.

By hashing the incoming requests, we can assign them to specific servers based on the hash value. In this example, we’ll hash the client names (C1, C2, C3, C4) to distribute the requests evenly across the servers.

Let’s assume we use a hash function that returns the following results for each client name:

1

2

3

4

hash("C1") -> 11

hash("C2") -> 12

hash("C3") -> 13

hash("C4") -> 14

Now we can use the modulo operation to map these hashes to the servers (in this case, four servers):

1

2

3

4

11 % 4 = 3

12 % 4 = 0

13 % 4 = 1

14 % 4 = 2

This means that C1 should be associated with server D, C2 with server A, C3 with server B, and C4 with server C.

Importance of a good hashing function

A good hashing function should provide uniformity, ensuring that clients are evenly distributed among the servers. This doesn’t mean that two clients won’t map to the same server, but it does mean that a well-designed hashing function should distribute data values evenly.

It is important to distinguish between cryptographic and non-cryptographic hash functions, because they serve very different purposes:

- Cryptographic hash functions (SHA-256, SHA-3) are designed for security: they resist collisions, preimage attacks, and tampering. Use these when integrity or authentication matters, for example, verifying file checksums or digital signatures.

- Non-cryptographic hash functions (MurmurHash3, xxHash) are designed for speed and uniform distribution. They are the right choice for load balancing, hash tables, and consistent hashing, where performance matters far more than cryptographic security.

A common mistake I see is recommending MD5 or SHA-1 for hashing in system design contexts. Both have been cryptographically broken for years, MD5 since 2004 and SHA-1 since 2017. For load balancing and request routing, you don’t need a cryptographic hash at all. MurmurHash3 or xxHash will give you excellent distribution with significantly better performance. If you do need a cryptographic hash (for example, verifying data integrity across nodes), use SHA-256.

By using hashing, we maximize the chance of getting cache hits, as all requests from a particular client will always be directed to the same server.

Handling changes in server count

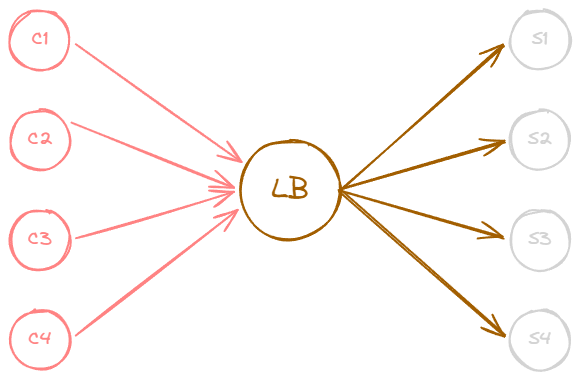

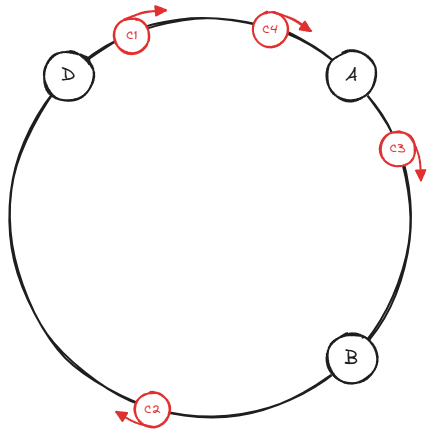

However, in distributed systems, issues like server failures or the need to add new servers can arise. If a server dies or if we need to add a new server, we must adjust our hashing strategy accordingly.

For example, if we add a fifth server (E), we can no longer mod by four; we must mod by five:

1

2

3

4

11 % 5 -> 1 (was 3)

12 % 5 -> 2 (was 0)

13 % 5 -> 3 (was 1)

14 % 5 -> 4 (was 2)

Now, C1 will be directed to server B instead of server D, C2 to server C instead of server A, and so on. This change means that we will once again face the problem of missing cache hits, as the new server assignments do not have the previously cached data.

In this simple example, every single client got remapped to a different server. With four servers, adding one server caused 100% of keys to move. In general, with simple modular hashing, adding or removing a single server causes roughly (n-1)/n of all keys to be remapped, where n is the new server count. For a 100-server cluster losing one server, that is about 99% of keys moving. This is the fundamental problem that consistent hashing and rendezvous hashing solve.

How to Solve the Problem of Adding or Removing Servers?

Consistent hashing and rendezvous hashing are two slightly more complex hashing strategies that address the issue of adding or removing servers. Both strategies minimize the number of keys that need to be remapped when a hash table gets resized, which is especially useful for load balancers that distribute traffic to servers, among other uses.

Consistent Hashing

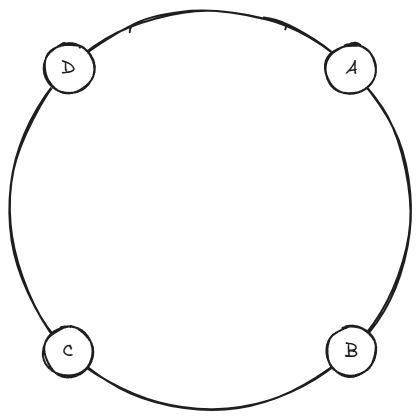

Consistent hashing is a technique that minimizes the number of requests that get forwarded to different servers when new servers are added or existing ones are removed. To better understand how it works, let’s consider an example.

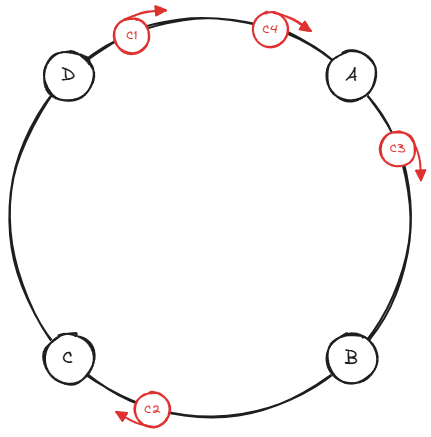

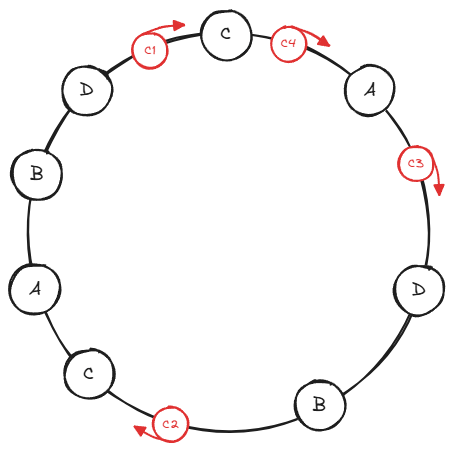

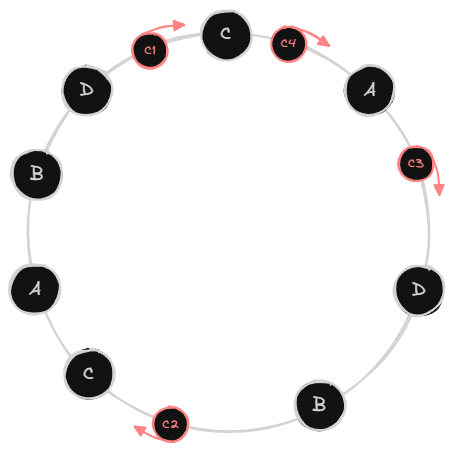

Imagine servers being organized on a circle, which is not a physical object but rather a conceptual representation to help us visualize the consistent hashing strategy. The servers are more or less evenly distributed along the circle, and their placement is determined by the output of a hashing function applied to each server’s name or identifier.

Clients are also placed on the circle using a hashing function, which might take the client’s IP address, username, or some other identifier as input.

To determine which server a request should be routed to, you start from the client’s position on the circle and move clockwise (or counterclockwise) until you encounter a server. That server is where the load balancer will redirect the client’s request.

Consistent Hashing Advantages

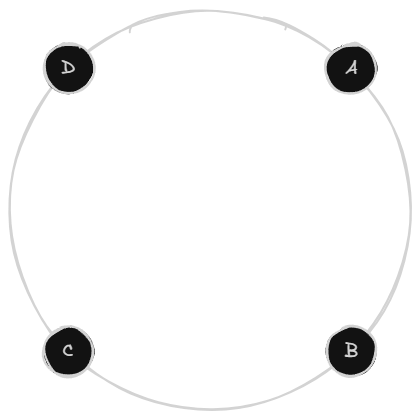

Consistent hashing is advantageous because it maintains most of the previous mappings between clients and servers even when servers are added or removed.

In the previous example with a simple hashing strategy, if a server went down, all calculations would need to be redone. For instance, if there were four servers and one died, the modulus operation would no longer be done by four, but rather by three.

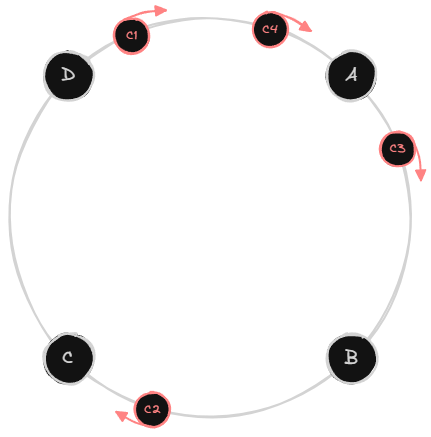

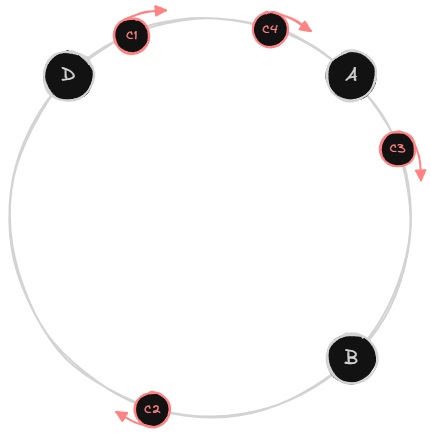

However, with consistent hashing, if a server goes down, let’s say server C, the clients will move in a clockwise direction to find the closest server. For most clients, nothing changes, but since server C is down, C2 will be redirected to server D.

When a server is removed, only the keys that were assigned to that server need to be redistributed (to the next server on the ring). On average, only K/n keys move, where K is the total number of keys and n is the number of servers. Compare that to the K * (n-1)/n keys that move with simple modular hashing.

Consistent Hashing Implementation

The ring can be implemented efficiently using a TreeMap (a sorted map backed by a red-black tree). Each server is hashed to one or more positions on the ring, and we use ceilingEntry() to find the next server clockwise from a given key’s hash position. This gives us O(log n) lookups.

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

public class ConsistentHashRing {

private final TreeMap<Integer, String> ring = new TreeMap<>();

private final int numberOfReplicas;

public ConsistentHashRing(int numberOfReplicas) {

this.numberOfReplicas = numberOfReplicas;

}

public void addServer(String server) {

for (int i = 0; i < numberOfReplicas; i++) {

int hash = hash(server + "-vnode-" + i);

ring.put(hash, server);

}

}

public void removeServer(String server) {

for (int i = 0; i < numberOfReplicas; i++) {

int hash = hash(server + "-vnode-" + i);

ring.remove(hash);

}

}

public String getServer(String key) {

if (ring.isEmpty()) {

throw new IllegalStateException("No servers available");

}

int hash = hash(key);

// Find the first server at or after this hash position

Map.Entry<Integer, String> entry = ring.ceilingEntry(hash);

if (entry == null) {

// Wrap around to the first server on the ring

entry = ring.firstEntry();

}

return entry.getValue();

}

private int hash(String key) {

// Using a simple but effective hash for demonstration.

// In production, use MurmurHash3 or xxHash.

int h = key.hashCode();

// Spread bits to reduce clustering

h ^= (h >>> 16);

return h;

}

}

Usage looks like this:

1

2

3

4

5

6

7

8

9

10

11

12

13

ConsistentHashRing ring = new ConsistentHashRing(150);

ring.addServer("server-A");

ring.addServer("server-B");

ring.addServer("server-C");

ring.addServer("server-D");

String server = ring.getServer("client-request-key-123");

// Returns the server responsible for this key

// When a server goes down, only its keys are redistributed

ring.removeServer("server-C");

// Keys that were on server-C now map to the next server clockwise

Virtual Nodes

In cases where you want to distribute the load more evenly across servers, you can pass the servers or server through multiple hashing functions and place the resulting hashes on the circle. This approach allows you to distribute the load more evenly or assign more responsibility to more powerful servers and distribute traffic more fairly.

This concept is called virtual nodes (vnodes). Instead of placing each physical server at a single point on the ring, we place it at multiple points. Each of these points is a virtual node that maps back to the same physical server.

The problem virtual nodes solve is straightforward. With only one point per server on the ring, the distribution is uneven, especially with a small number of servers. One server might own 60% of the ring while another owns only 10%. Virtual nodes smooth this out by giving each server many positions on the ring, so the arcs between positions are smaller and more uniform.

You can see this in the ConsistentHashRing implementation above. The numberOfReplicas parameter controls how many virtual nodes each server gets. A typical production value is 100 to 200 virtual nodes per server. In the addServer method, we create entries like "server-A-vnode-0", "server-A-vnode-1", and so on, each hashed to a different position on the ring.

Virtual nodes also provide a natural way to handle heterogeneous hardware. If server A has twice the capacity of server B, you can give server A 200 virtual nodes and server B 100. Server A will then own roughly twice as much of the ring and receive twice as many requests:

1

2

3

4

5

// Powerful server gets more virtual nodes

ring.addServer("server-A-large", 300);

// Smaller server gets fewer virtual nodes

ring.addServer("server-B-small", 100);

To support this, you would modify addServer to accept a per-server replica count:

1

2

3

4

5

6

public void addServer(String server, int replicas) {

for (int i = 0; i < replicas; i++) {

int hash = hash(server + "-vnode-" + i);

ring.put(hash, server);

}

}

Consistent hashing is powerful because it maintains a level of consistency between hashes and their target buckets, ensuring a more stable relationship between clients (or requests, or IP addresses) and servers or whatever else you’re using.

Rendezvous Hashing

Rendezvous Hashing, or Highest Random Weight (HRW) Hashing, works by assigning each server a weight based on a combination of the server’s identifier and the data item’s key. The server with the highest weight for a given key is chosen to store the corresponding data item. This ensures an even distribution of data items across the available servers, while also providing fault tolerance and minimizing data movement when servers are added or removed.

Here’s how the weight computation works. For each key, we compute a score for every server by hashing the combination of the server name and the key together. The hash function produces a numeric value, and the server with the highest value “wins” that key:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

public class RendezvousHashing {

private final List<String> servers = new ArrayList<>();

public void addServer(String server) {

servers.add(server);

}

public void removeServer(String server) {

servers.remove(server);

}

public String getServer(String key) {

if (servers.isEmpty()) {

throw new IllegalStateException("No servers available");

}

String bestServer = null;

long highestWeight = Long.MIN_VALUE;

for (String server : servers) {

long weight = computeWeight(server, key);

if (weight > highestWeight) {

highestWeight = weight;

bestServer = server;

}

}

return bestServer;

}

private long computeWeight(String server, String key) {

// Hash the combination of server and key

String combined = server + ":" + key;

// In production, use MurmurHash3 or xxHash for better distribution

long h = combined.hashCode();

// Mix bits to improve distribution

h = ((h >> 16) ^ h) * 0x45d9f3b;

h = ((h >> 16) ^ h) * 0x45d9f3b;

h = (h >> 16) ^ h;

return h;

}

}

The key insight is that the weight is deterministic: given the same server name and key, you always get the same weight. This means any node in the system can independently compute the same assignment without coordination.

Here’s an example to illustrate how Rendezvous Hashing works in practice:

Suppose we have four servers (A, B, C, and D) and three IP addresses (IP1, IP2, IP3). To determine which server will handle each IP address, we calculate the weight for each server-IP address combination:

IP1:

- Server A: weight 3.0

- Server B: weight 1.5

- Server C: weight 2.1

- Server D: weight 1.9

Server A has the highest weight for IP address IP1, so it will be responsible for handling IP1.

IP2:

- Server A: weight 1.1

- Server B: weight 2.7

- Server C: weight 0.5

- Server D: weight 1.8

Server B has the highest weight for IP address IP2, so it will be responsible for handling IP2.

IP3:

- Server A: weight 2.2

- Server B: weight 1.9

- Server C: weight 3.5

- Server D: weight 0.7

Server C has the highest weight for IP address IP3, so it will be responsible for handling IP3.

Now let’s say server C goes down. To redistribute the IP addresses, we only need to recalculate the weights for server C’s IP addresses (in this case, IP3).

IP3 (recalculated without server C):

- Server A: weight 2.2

- Server B: weight 1.9

- Server D: weight 0.7

Server A now has the highest weight for IP address IP3, so it will be responsible for handling IP3.

The other IP addresses remain assigned to their original servers, minimizing the amount of redistribution required.

This is the core strength of rendezvous hashing: when a server is removed, only the keys that were assigned to that server move, and they each independently find the next-best server based on the remaining weights. No ring structure is needed, no virtual nodes, just a simple loop over all servers for each key.

Consistent Hashing vs Rendezvous Hashing: When to Use Each

Both consistent hashing and rendezvous hashing solve the same core problem, minimizing key redistribution when servers are added or removed, but they differ in their trade-offs.

Prefer consistent hashing when you need O(log n) lookup time with a large number of nodes. Consistent hashing uses a ring structure with virtual nodes, making it a natural fit for systems like distributed caches (e.g., Memcached, Amazon DynamoDB) where fast lookups are critical and the node count is high.

Prefer rendezvous hashing when simplicity and uniform distribution matter more than raw lookup speed. Rendezvous hashing requires computing a score for every server on each lookup (O(n) per key), but it produces a more even distribution without needing virtual nodes. It works well when the number of servers is moderate and you want a straightforward implementation with no additional data structures to maintain.

In practice, consistent hashing dominates in large-scale production systems due to its logarithmic lookup, while rendezvous hashing is favored in smaller clusters or when you want deterministic, easy-to-reason-about placement without ring management overhead.

References

- David Karger et al., “Consistent Hashing and Random Trees: Distributed Caching Protocols for Relieving Hot Spots on the World Wide Web,” ACM Symposium on Theory of Computing (1997)

- Daniel Thaler and Chinya Ravishankar, “Using Name-Based Mappings to Increase Hit Rates,” IEEE/ACM Transactions on Networking 6, no. 1 (1998)

Related posts: Load Balancing, Hash Tables, Replication and Sharding, Caching